Benchmark SUPER mode of NVIDIA Jetson Orin NX

In 2025, AI is undoubtedly the hottest topic in technology, and many application scenarios require local deployment, such as smart surveillance systems, intelligent retail stores, and small-scale robots running LLMs or VLMs.

The NVIDIA Jetson Orin NX is a compact, high-performance AI computing module designed for edge applications such as robotics, smart cameras, and industrial automation. It delivers up to 157 TOPS of AI performance using the NVIDIA Ampere architecture, making it ideal for running complex AI models locally with low latency and high efficiency.

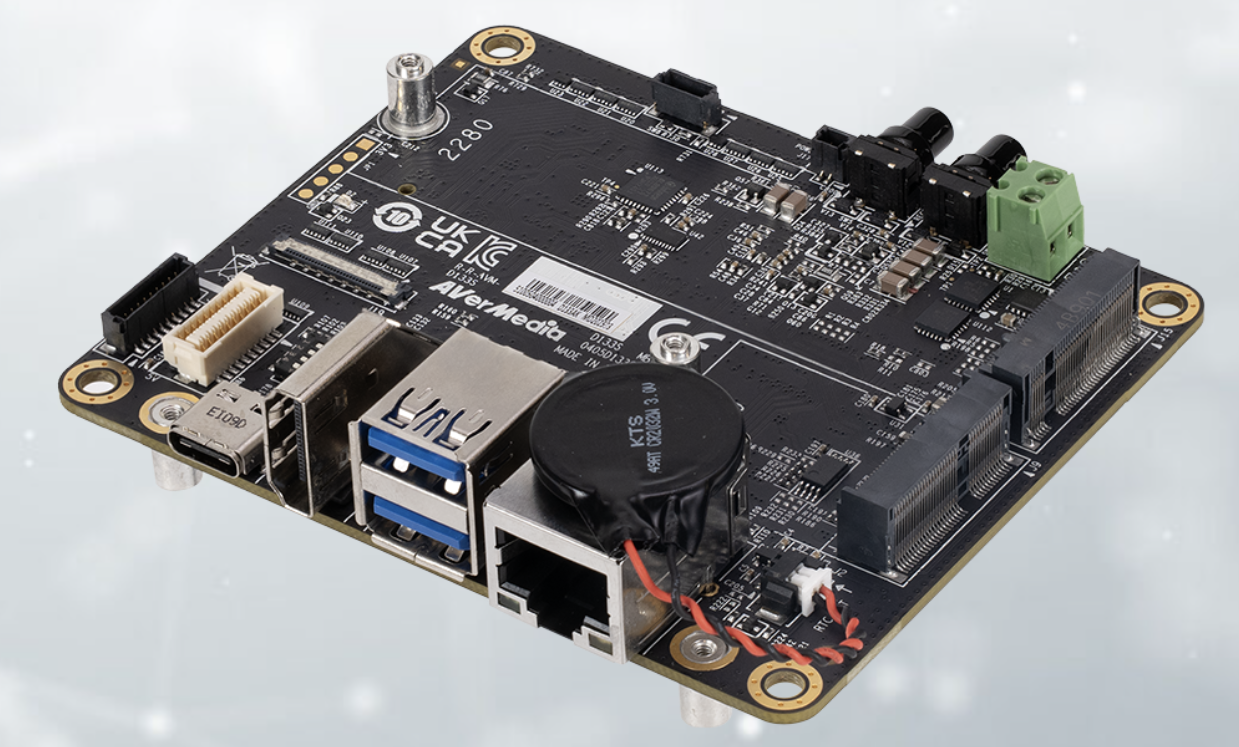

To unlock its full potential, the AVerMedia D133S Carrier Board provides a robust and versatile platform tailored for the Orin NX. It supports super mode for enhanced performance and offers a rich set of I/O.

In this article, we introduce and benchmark two standard carrier boards from AVerMedia, the D133 and the D133S. Both offer rich I/O options such as camera inputs and multiple Ethernet ports, and pair them with the GPU of the Orin NX module, which makes them especially suitable for AI edge computing applications.

D133 and D133S from AVerMedia

The following table highlights the differences in main features between the D133 and D133S.

| Device | D133 | D133S |
|---|---|---|
| Platform Compatibility | NVIDIA® Jetson Orin NX | NVIDIA® Jetson Orin NX |
| Super Mode Support | No | Yes |
| OOB Functionality | Not available | 1 x OOB (Optional, for power cycling and cloud serial console) |
| 5G Daughter Board | Not available | 1 x 5G daughter board (Optional, supports 4G/5G functions) |

Power Mode

The D133S supports a new power mode that utilizes a higher 40W reference power, as well as a MAXN SUPER mode.

| Device | Power Mode |
|---|---|
| D133 | 10W, 15W, 25W, MAXN |
| D133S | 10W, 15W, 25W, 40W, MAXN SUPER |

Suitable Scenarios

For D133

- Academic Development: Ideal for academic research, AI model development, and prototyping.

- Basic Edge Computing: For tasks like smart cameras, simple object detection, or image processing.

- Cost-Sensitive Projects: Great for startups or small businesses.

For D133S

- Smart City & Industrial Applications: Such as traffic monitoring, smart lighting, or factory equipment supervision.

- Remote Management Needs: OOB functionality enables remote reboot and diagnostics, perfect for unattended environments.

- High-Performance Edge AI: Super Mode supports demanding AI inference tasks.

- 5G-Enabled Deployments: Suitable for mobile systems, in-vehicle platforms, or remote video streaming.

Info

We provide a Quick Start Guide for VLM development with the D133S series.

Key Advantages of VLM Over LLM

- Real-World Contextual Awareness: VLMs can "see" the world, giving them a more grounded understanding of real-world situations. Examples include identifying objects in photos, detecting emotions in facial expressions, and reading product labels or signs.

- Better Performance in Tasks Requiring Visual Context: VLMs outperform LLMs in tasks where visual input is crucial, such as diagnosing issues from a photo, interpreting data from a graph or table image, and OCR combined with comprehension.

- Real-World Applications:

  - Medical imaging analysis

  - Retail (visual search, product tagging)

  - Accessibility tools (describing images for visually impaired users)

  - Autonomous driving (object detection + decision-making)

VLM Benchmark

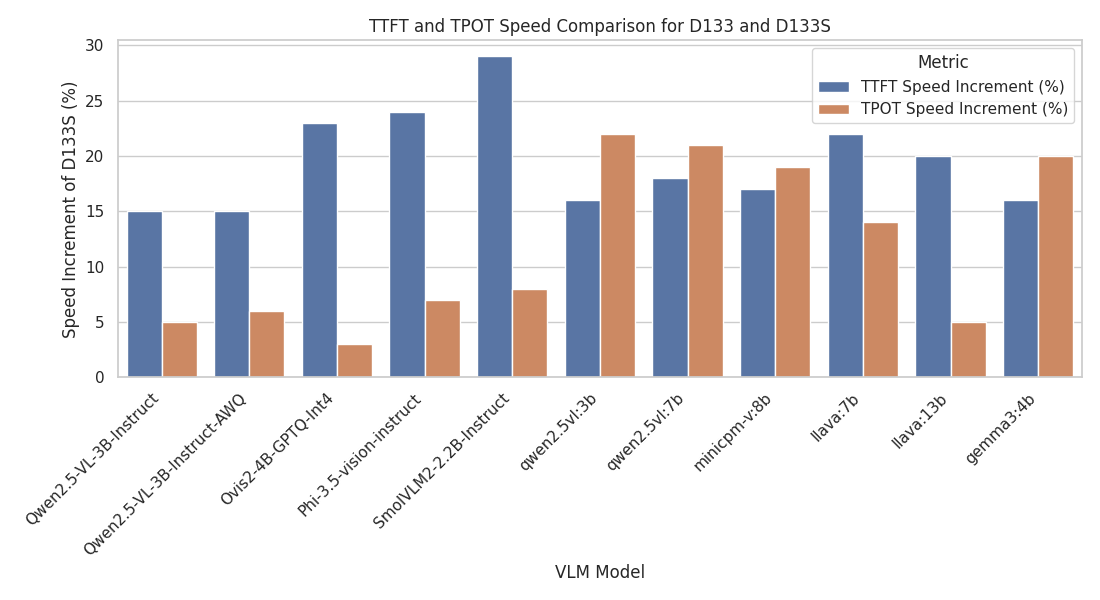

The advantages above show what VLMs can offer beyond LLMs. However, little information is available on how VLM performance compares between the D133 and the D133S, so we benchmark several VLM models on both boards.

The following table compares the computational performance of the D133 and D133S, which represent normal mode (MAXN) and super mode (MAXN SUPER) respectively.

The table shows that both TTFT (time to first token) and TPOT (time per output token) are faster on the D133S: TTFT improves by 15% to 29% and TPOT by 3% to 22%, depending on the model and framework.

The improvement in TTFT is especially notable, indicating that the D133S offers greater potential for accelerating AI model inference than the D133.

We measured the average TTFT and TPOT over the open MMStar dataset, which also lets us evaluate the accuracy of each VLM (the MMStar column).
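As a rough illustration of how these two metrics can be obtained from a streamed response, here is a minimal sketch. It is not the benchmark harness used for this article. It assumes TTFT is the delay between sending the request and receiving the first token, and TPOT is the average gap between the remaining tokens. `measure_stream` and `fake_stream` are hypothetical names; `fake_stream` stands in for a real streaming client such as one talking to vLLM or Ollama.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> Tuple[float, float]:
    """Return (ttft, tpot) in seconds for one streamed response.

    TTFT: time from the start of the request until the first token arrives.
    TPOT: average gap between the tokens after the first (assumed definition).
    """
    start = clock()
    first = None
    last = start
    count = 0
    for _ in tokens:
        last = clock()          # timestamp of the token that just arrived
        if first is None:
            first = last        # remember when the first token arrived
        count += 1
    if first is None:
        raise ValueError("stream produced no tokens")
    ttft = first - start
    tpot = (last - first) / (count - 1) if count > 1 else 0.0
    return ttft, tpot


def fake_stream(n_tokens: int, first_delay: float, gap: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming VLM response."""
    time.sleep(first_delay)     # simulates prefill (image + prompt processing)
    yield "tok0"
    for i in range(1, n_tokens):
        time.sleep(gap)         # simulates decoding one more token
        yield f"tok{i}"


if __name__ == "__main__":
    ttft, tpot = measure_stream(fake_stream(5, first_delay=0.05, gap=0.01))
    print(f"TTFT: {ttft:.3f} s, TPOT: {tpot:.3f} s")
```

Averaging the per-request values over every sample in the dataset would then give figures comparable in spirit to the ones reported here.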

| VLM Model | Framework | TTFT Speed-up | TPOT Speed-up | MMStar Score | TTFT (s) D133 | TTFT (s) D133S | TPOT (s) D133 | TPOT (s) D133S |
|---|---|---|---|---|---|---|---|---|
| Qwen/Qwen2.5-VL-3B-Instruct | vLLM | +15% | +5% | 0.539 | 0.69 | 0.599 | 0.084 | 0.08 |
| Qwen/Qwen2.5-VL-3B-Instruct-AWQ | vLLM | +15% | +6% | 0.525 | 0.544 | 0.471 | 0.034 | 0.032 |
| AIDC-AI/Ovis2-4B-GPTQ-Int4 | vLLM | +23% | +3% | 0.609 | 1.68 | 1.367 | 0.034 | 0.033 |
| microsoft/Phi-3.5-vision-instruct | vLLM | +24% | +7% | 0.467 | 1.33 | 1.075 | 0.111 | 0.104 |
| HuggingFaceTB/SmolVLM2-2.2B-Instruct | vLLM | +29% | +8% | 0.447 | 2.202 | 1.709 | 0.056 | 0.052 |
| qwen2.5vl:3b | Ollama | +16% | +22% | 0.509 | 2.119 | 1.82 | 0.062 | 0.051 |
| qwen2.5vl:7b | Ollama | +18% | +21% | 0.577 | 2.652 | 2.249 | 0.099 | 0.082 |
| minicpm-v:8b | Ollama | +17% | +19% | 0.477 | 1.98 | 1.688 | 0.082 | 0.069 |
| llava:7b | Ollama | +22% | +14% | 0.317 | 2.497 | 2.043 | 0.079 | 0.069 |
| llava:13b | Ollama | +20% | +5% | 0.364 | 4.31 | 3.578 | 0.166 | 0.158 |
| gemma3:4b | Ollama | +16% | +20% | 0.384 | 5.407 | 4.655 | 0.071 | 0.059 |
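The article does not state how the percentages in the two speed columns were derived. The sketch below assumes they are the ratio of the D133 time to the D133S time, minus one, rounded to the nearest percent. This assumption reproduces the published values for the rows in the table above, though the original figures may have been computed from unrounded timings. `speedup_percent` is a hypothetical helper name.

```python
def speedup_percent(baseline_s: float, improved_s: float) -> int:
    """Speed-up of `improved_s` over `baseline_s`, as a rounded percentage.

    Assumed formula: (baseline / improved - 1) * 100.
    """
    return round((baseline_s / improved_s - 1.0) * 100)


# (TTFT D133, TTFT D133S, TPOT D133, TPOT D133S), copied from the table.
rows = {
    "Qwen/Qwen2.5-VL-3B-Instruct": (0.69, 0.599, 0.084, 0.08),
    "HuggingFaceTB/SmolVLM2-2.2B-Instruct": (2.202, 1.709, 0.056, 0.052),
    "qwen2.5vl:3b": (2.119, 1.82, 0.062, 0.051),
}

for model, (ttft_d133, ttft_d133s, tpot_d133, tpot_d133s) in rows.items():
    ttft_gain = speedup_percent(ttft_d133, ttft_d133s)
    tpot_gain = speedup_percent(tpot_d133, tpot_d133s)
    print(f"{model}: TTFT +{ttft_gain}%, TPOT +{tpot_gain}%")
```

Under this reading, "+29%" means the D133S delivers the first token 1.29 times as fast as the D133, not that the latency itself dropped by 29%.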

Info

MMStar is a multi-modal benchmark designed to evaluate the capabilities of large vision-language models (VLMs). You can refer to Lin-Chen/MMStar for more details.

A more competitive choice for AI deployment

Increased computation capability

- The D133S offers higher power modes (40W and MAXN SUPER) that increase AI inference speed.

Enhanced mobility and portability

- The D133S supports optional OOB management and 4G/5G communication, which is especially useful for mobile and remote applications.